Using Stockfish to automatically find Tactics Puzzles

Can an engine find a tactical puzzle by itself?

When engines say that a position is much better for one side, it's not always clear if this is a strategic advantage or if there are some hidden tactics in the position.

Differentiating between kinds of positions is very difficult, since it's hard to pin down which factors make a position tactical or strategic. I tried something along these lines with the sharpness score, and recently I had a different idea that I wanted to test: looking at how the engine's evaluation changes as the search depth increases.

Idea

The evaluation function of engines only looks at the current position and doesn't take any tactics into account. The engine is only able to evaluate future positions with its search function.

My idea was therefore that at lower depths Stockfish might not spot a tactic, since it can't look far enough ahead to see that it wins material. The evaluation would stay fairly low until Stockfish calculates deep enough to see the tactic in the position.

Take the following position as an example:

The position looks somewhat level, until you realise that the black knight on d4 can simply be taken. Here is a different example:

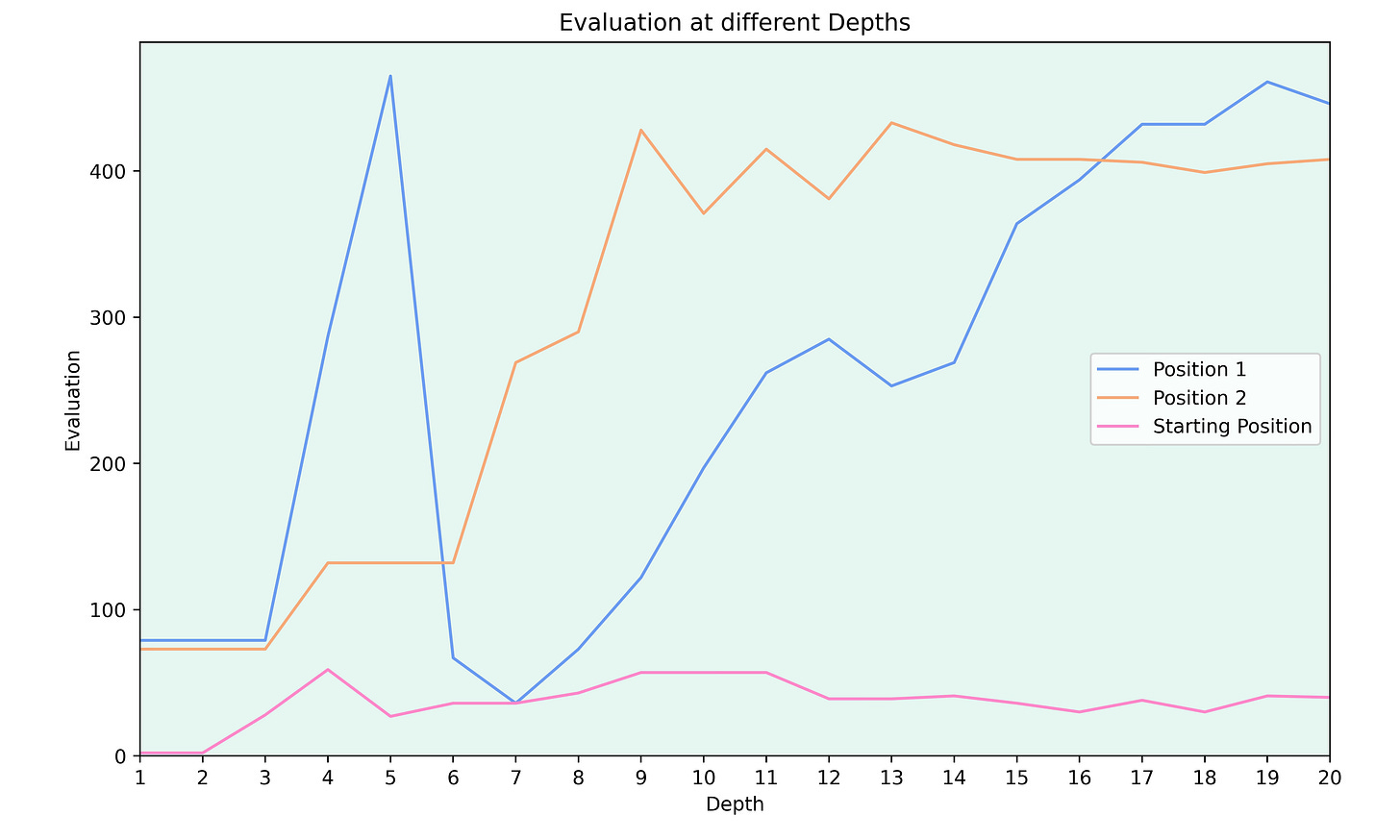

Again, at first sight this position looks level, but Black can win material starting with 1…Bxb3. To test the idea, I looked at the evaluation of these positions at different depths:

Both positions from above (in blue and orange) start with a relatively low evaluation, which rises as the engine's search depth increases. For comparison, I added the evaluation of the starting position; since it contains no tactics, its evaluation stays roughly constant.

The early spike in the evaluation of the first position might be because the engine doesn’t calculate deep enough to see that the knight can be recaptured by the pawn. I’ll come back to these spikes later.
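The post doesn't say how the per-depth scores were collected. One option, sketched here as an assumption rather than the author's method, is to run a single `go depth 20` search and read the `info` lines Stockfish typically prints after each completed iteration, keeping the exact `score cp` or `score mate` value for each depth. UCI scores are from the side to move's point of view, and `MATE_SCORE` is a hypothetical stand-in value for forced mates. Running a fresh search per depth instead (for example with python-chess's `engine.analyse(board, chess.engine.Limit(depth=d))`) avoids sharing the hash table between depths, so the two approaches may not produce identical curves.

```python
MATE_SCORE = 100_000  # hypothetical stand-in centipawn value for a forced mate


def scores_by_depth(uci_lines: list[str]) -> dict[int, int]:
    """Map each completed depth to its exact score from Stockfish's `info` lines.

    UCI scores are from the side to move's point of view. If a depth is
    reported more than once, the last exact score wins.
    """
    scores: dict[int, int] = {}
    for line in uci_lines:
        tokens = line.split()
        # Only search-progress lines carrying both a depth and a score matter.
        if len(tokens) < 2 or tokens[0] != "info" or tokens[1] == "string":
            continue
        if "depth" not in tokens or "score" not in tokens:
            continue
        # Aspiration-window fail highs/lows report bounds, not exact scores.
        if "lowerbound" in tokens or "upperbound" in tokens:
            continue
        # With MultiPV > 1, keep only the best line.
        if "multipv" in tokens and tokens[tokens.index("multipv") + 1] != "1":
            continue
        depth = int(tokens[tokens.index("depth") + 1])
        i = tokens.index("score")
        kind, value = tokens[i + 1], int(tokens[i + 2])
        if kind == "cp":
            scores[depth] = value
        elif kind == "mate":
            # "mate 0" or a negative count means the side to move is getting mated.
            scores[depth] = MATE_SCORE if value > 0 else -MATE_SCORE
    return scores
```

Fed the full output of one search, this gives one point per depth, which is the kind of data plotted in the graphs described above.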

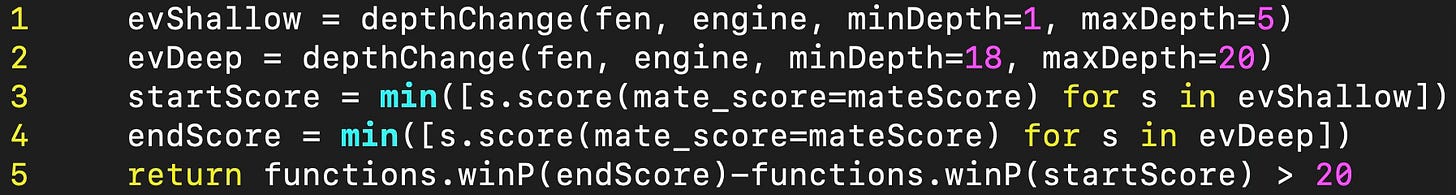

Implementation

I implemented this by analysing the position at depths 1 to 5 and taking the lowest score (I looked at a range of depths to avoid spikes like the ones seen above). Then I took the lowest score of the position at depths 18 to 20. I converted both evaluations into winning percentages, and if the winning percentage at the higher depths was at least 20% higher, the position was deemed to be a tactic.
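A minimal sketch of this rule, assuming the scores are already available as centipawns from the side to move's point of view (for example via python-chess's `PovScore.relative.score(mate_score=...)`). The post doesn't say which centipawn-to-win% conversion was used, so `win_percent` borrows the logistic formula Lichess publishes for its accuracy metric, and `THRESHOLD` reads "20% higher" as 20 percentage points; both are assumptions.

```python
import math

LOW_DEPTHS = range(1, 6)     # depths 1-5
HIGH_DEPTHS = range(18, 21)  # depths 18-20
THRESHOLD = 20.0             # assumption: "20% higher" read as 20 percentage points


def win_percent(centipawns: float) -> float:
    """Convert a centipawn score into a 0-100 winning chance.

    Assumption: uses the logistic curve Lichess publishes for its accuracy
    metric; the post doesn't say which conversion it used.
    """
    return 50 + 50 * (2 / (1 + math.exp(-0.00368208 * centipawns)) - 1)


def is_tactic(scores_by_depth: dict[int, float]) -> bool:
    """Flag a position whose winning chance jumps between shallow and deep search.

    `scores_by_depth` maps depth -> centipawns for the side to move and must
    contain every depth in LOW_DEPTHS and HIGH_DEPTHS.
    """
    shallow = min(scores_by_depth[d] for d in LOW_DEPTHS)
    deep = min(scores_by_depth[d] for d in HIGH_DEPTHS)
    return win_percent(deep) - win_percent(shallow) >= THRESHOLD
```

For example, a shallow score of 0 (50%) that becomes +300 cp at depths 18 to 20 (about 75%) would be flagged, while a position that is +300 cp at every depth would not. Because both windows take the minimum, a one-off upward spike at a single depth is ignored, while a downward spike still counts.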

Testing

Identifying known tactics

First, I wanted to check whether this method actually identifies tactics. To do this, I downloaded the Lichess puzzle database and checked, for each position, whether my program identifies it as a tactic.

I tested 2,000 positions and my program identified 99.5% of them as tactics. The other 0.5% were positions where the engine found the solution at low depths, like mate-in-1 positions.
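Reproducing this test depends on one detail of the Lichess puzzle CSV: the `FEN` column is the position *before* the opponent's move, and the first move in `Moves` has to be played to reach the position the solver sees. The sketch below assumes the CSV has already been decompressed (the file name is an assumption), skips an optional header row, and hands each `(fen, setup_move)` pair to a classifier. `classify` is a hypothetical callback that would apply the move (for instance with python-chess's `chess.Board(fen)` and `board.push_uci(move)`) and then run the depth comparison.

```python
import csv
import itertools
from typing import Callable, Iterable, Iterator


def puzzle_positions(rows: Iterable[list[str]]) -> Iterator[tuple[str, str]]:
    """Yield (fen, setup_move) pairs from Lichess puzzle CSV rows.

    Assumed column order: PuzzleId, FEN, Moves, ...
    """
    for row in rows:
        if len(row) < 3 or row[0] == "PuzzleId":  # skip blank lines and a header row
            continue
        fen, moves = row[1], row[2]
        # The first move is the opponent's; the puzzle starts after it.
        yield fen, moves.split()[0]


def read_puzzles(path: str, limit: int) -> list[tuple[str, str]]:
    """Read the first `limit` puzzles, e.g. from "lichess_db_puzzle.csv" (assumed name)."""
    with open(path, newline="") as f:
        return list(itertools.islice(puzzle_positions(csv.reader(f)), limit))


def detection_rate(
    positions: Iterable[tuple[str, str]],
    classify: Callable[[str, str], bool],
) -> float:
    """Percentage of puzzles that the (hypothetical) `classify` flags as tactics."""
    flags = [classify(fen, move) for fen, move in positions]
    if not flags:
        raise ValueError("no positions to test")
    return 100 * sum(flags) / len(flags)
```

On the post's numbers, 99.5% of 2,000 positions means 1,990 flagged puzzles and 10 misses.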

So the program identifies tactical puzzles with high accuracy. The next question is whether strategic positions with an advantage for one side get mistakenly identified as tactics.

Strategic positions

Since hand-picking strategic positions is very time-consuming, I only looked at a couple of positions and hoped that any problems these didn't cover would show up when looking at whole games.

I looked at positions with a big advantage for one side, like the following position from the game Mareco-Aravindh, 2013:

White is clearly much better, but not for tactical reasons. So my hope was that White's advantage would be visible at both low and high depths. Here is how the evaluation changes with depth for this position:

Already at depth 1, Stockfish identifies that White is much better. Since there isn’t a big evaluation difference between low and higher depths, the position doesn't get classified as a tactic by my program, which is what I wanted.

Finding tactics in my blitz games

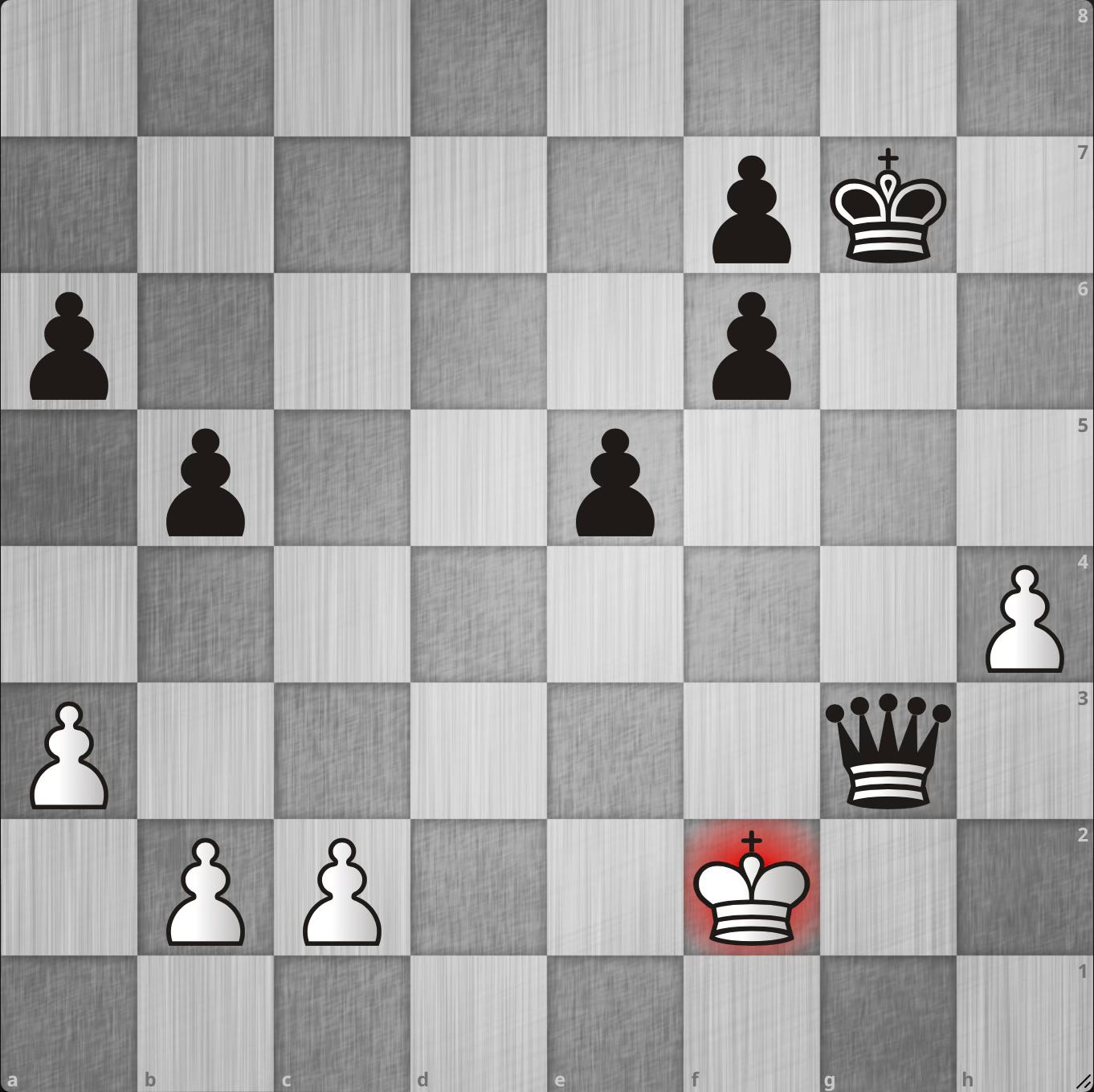

Finally, I used this method to find tactics in my own blitz games. I downloaded my 200 most recent blitz games and ran the program over them. You can find all the tactics in this Lichess study, and here is one example:

White’s best move is 1.Rg7 and due to the threat of 2.Rcc7, Black has to give up their queen.

Limitations

I was pleasantly surprised by how well this approach worked, but it also has its limitations.

The program flagged many positions from my blitz games that weren't tactics. I identified a few types of positions that cause problems for this approach.

Endgames

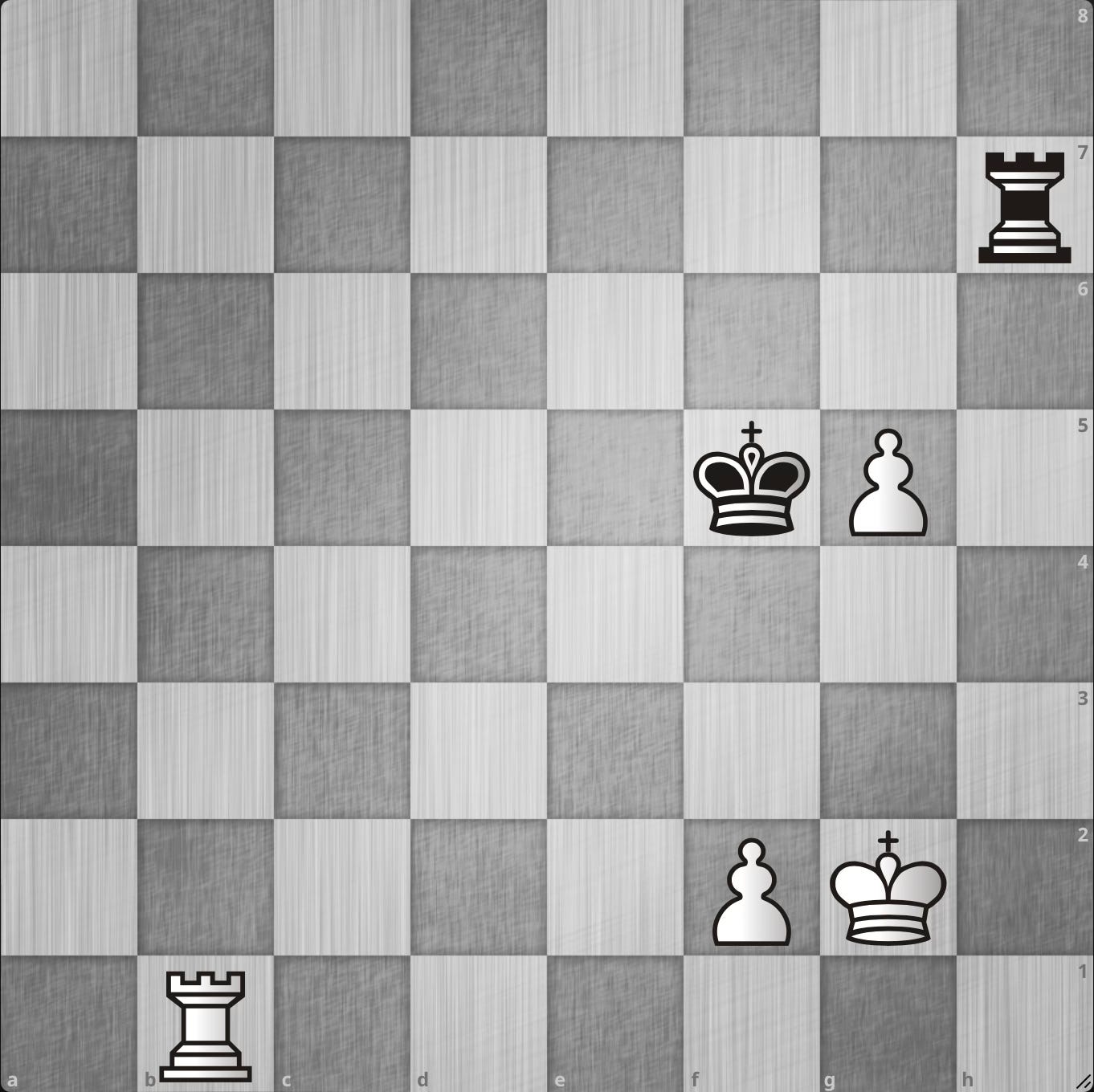

The biggest problem with this approach seems to be endgames. I got many endgame positions that didn't contain any tactics. The low-depth evaluation seems to be quite poor in endings, which leads to big differences between the shallow and deep evaluations. Here is one example that the program flagged:

Here White takes the queen and is winning in the king and pawn endgame. I think that Stockfish misevaluated the king and pawn endgame at lower depths, maybe because White is a pawn down. This brings us nicely to the next small problem.

Material imbalance

This method also doesn't handle material imbalances well, which makes sense on reflection. When one side is down material, the shallow search says that they are clearly worse, but the deeper search might see that they have enough compensation, so it looks as if there is a tactic in the position.

So the program works as expected, but the resulting positions aren’t really tactics.

Evaluation spikes

As mentioned earlier, there also seem to be some evaluation spikes at different depths. One example I noticed was the following position:

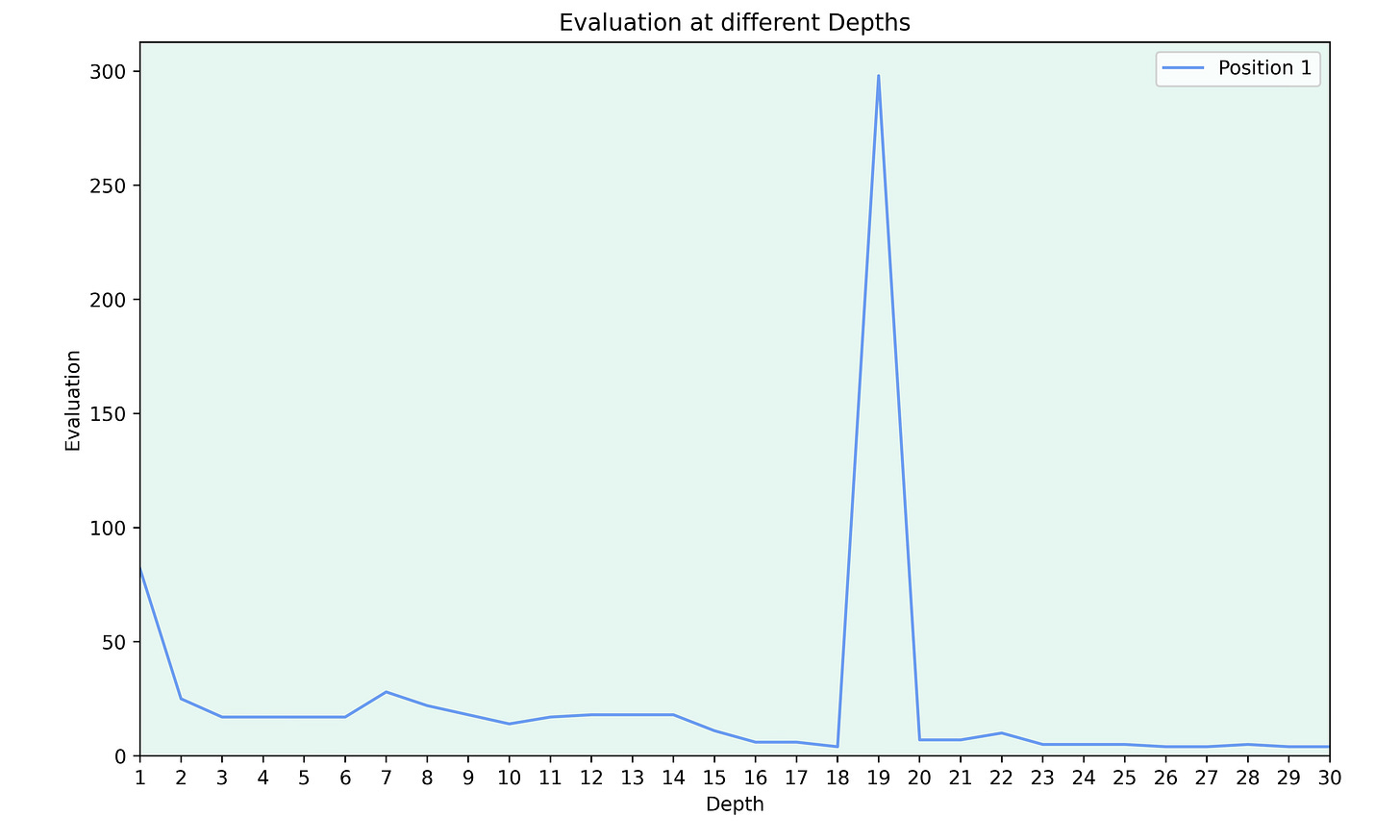

White is two pawns up, but the position is a drawn rook endgame. Stockfish mostly agrees, but the evaluation graph looks strange:

For some reason Stockfish briefly thinks that the position is winning at depth 19.

While analysing positions with multiple threads, I also noticed that the evaluation can change a lot from one run to the next. I knew that Stockfish isn't deterministic when it uses multiple threads, but I was very surprised by how large the difference can be. The spike in the graph above isn't always there: sometimes this position is correctly evaluated as a draw at all depths, and sometimes there is a spike at one specific depth. Using only one thread makes the evaluations consistent across runs, but it also slows everything down.
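As a sketch of a reproducible setup, these are the UCI commands that would run one single-threaded, fixed-depth search; the helper name `uci_commands` and the `Hash` size are illustrative assumptions. Sending `ucinewgame` before each position clears Stockfish's hash table, so an earlier search can't influence the next one. With python-chess, `engine.configure({"Threads": 1})` sets the same option.

```python
def uci_commands(fen: str, depth: int, threads: int = 1, hash_mb: int = 16) -> list[str]:
    """UCI commands for one fixed-depth search of `fen` (hypothetical helper).

    A caller would write each line to Stockfish's stdin, waiting for
    "uciok" after "uci" and "readyok" after "isready".
    """
    return [
        "uci",
        f"setoption name Threads value {threads}",  # 1 thread: reproducible search
        f"setoption name Hash value {hash_mb}",
        "ucinewgame",  # clears the hash table left over from earlier searches
        "isready",
        f"position fen {fen}",
        f"go depth {depth}",
    ]
```

The trade-off is the one described above: a single thread gives repeatable curves, at the cost of slower analysis.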

For all other graphs in this post, I used Stockfish with one thread to get consistent results, but the analysis of my blitz games was done with multiple threads, so those results may vary.

Conclusion

I think that this approach worked quite well and at least some of the problems can be fixed by excluding certain positions like tablebase endings, although this obviously isn't ideal. One can also easily ensure that the side to play isn’t down in material in the starting position.
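Both filters are cheap to compute from a FEN. A minimal sketch, assuming the usual 1/3/3/5/9 piece values and treating any position with at most seven pieces (kings included) as a tablebase ending, since that is what the Syzygy tablebases cover; the function names are hypothetical.

```python
PIECE_VALUES = {"p": 1, "n": 3, "b": 3, "r": 5, "q": 9, "k": 0}
MAX_TABLEBASE_PIECES = 7  # Syzygy tablebases cover up to 7 pieces, kings included


def material_balance(fen: str) -> int:
    """Material of the side to move minus the opponent's, in pawns."""
    placement, side_to_move = fen.split()[:2]
    white = sum(PIECE_VALUES[c.lower()] for c in placement if c.isupper())
    black = sum(PIECE_VALUES[c] for c in placement if c.islower())
    return white - black if side_to_move == "w" else black - white


def piece_count(fen: str) -> int:
    """Number of pieces on the board, kings and pawns included."""
    return sum(c.isalpha() for c in fen.split()[0])


def worth_checking(fen: str) -> bool:
    """Skip tablebase endings and positions where the side to move is down material."""
    return piece_count(fen) > MAX_TABLEBASE_PIECES and material_balance(fen) >= 0
```

Only positions that pass `worth_checking` would then go through the depth comparison.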

There are other approaches to similar problems I'd like to explore, so I only wanted this to be a proof of concept and didn’t want to spend a lot of time on different manual filters.

If you have any improvement ideas or can think of other approaches to identify tactics, let me know.

Great idea! Is the code open source? Is there a way for other people to reproduce the experiment? Thanks

Could this inspire a new kind of 'evaluation' puzzle?

Take a position with equal material where the evaluation clearly favours one side at both low and high depths, and ask the human solver to identify which side is better.

Maybe it would be too easy? And guessing would be an issue.