Ever since I first tried to measure the sharpness of chess positions, I have been interested in how this sharpness relates to move accuracy in real games. After analysing many OTB games, I decided to finally look at these two scores together.

Note that I'm not a statistician or data scientist, so if you have any improvement ideas for analysing the data, please let me know.

Data

I looked at 385 grandmaster games from recent super tournaments and the Sharjah Masters. Since the sharpness score explodes for very one-sided positions, I decided to only look at moves where neither side had more than a +3 advantage (according to Stockfish; see the note at the end of the post). This left me with about 25,000 positions to analyse.

To get the accuracy and sharpness of the moves, I went through each game and calculated the sharpness of every position along with the accuracy of the move that was played in it.
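As a minimal sketch of the filtering step, assuming each analysed move is stored with its engine evaluation, sharpness and accuracy: the `MoveRecord` type and its field names are hypothetical, and I'm assuming the evaluation is expressed in pawns from a fixed point of view. How the sharpness and accuracy values themselves are computed is not shown here.

```python
from dataclasses import dataclass


@dataclass
class MoveRecord:
    # Hypothetical record layout, used only for illustration.
    eval_pawns: float  # engine evaluation of the position, in pawns (assumed unit)
    sharpness: float   # sharpness score of the position
    accuracy: float    # accuracy score of the move played


def keep_balanced(records, max_abs_eval=3.0):
    """Keep only positions where neither side is ahead by more than max_abs_eval."""
    return [r for r in records if abs(r.eval_pawns) <= max_abs_eval]
```

With a cutoff of 3.0, a position evaluated at -3.0 is kept while one at +3.2 is dropped.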

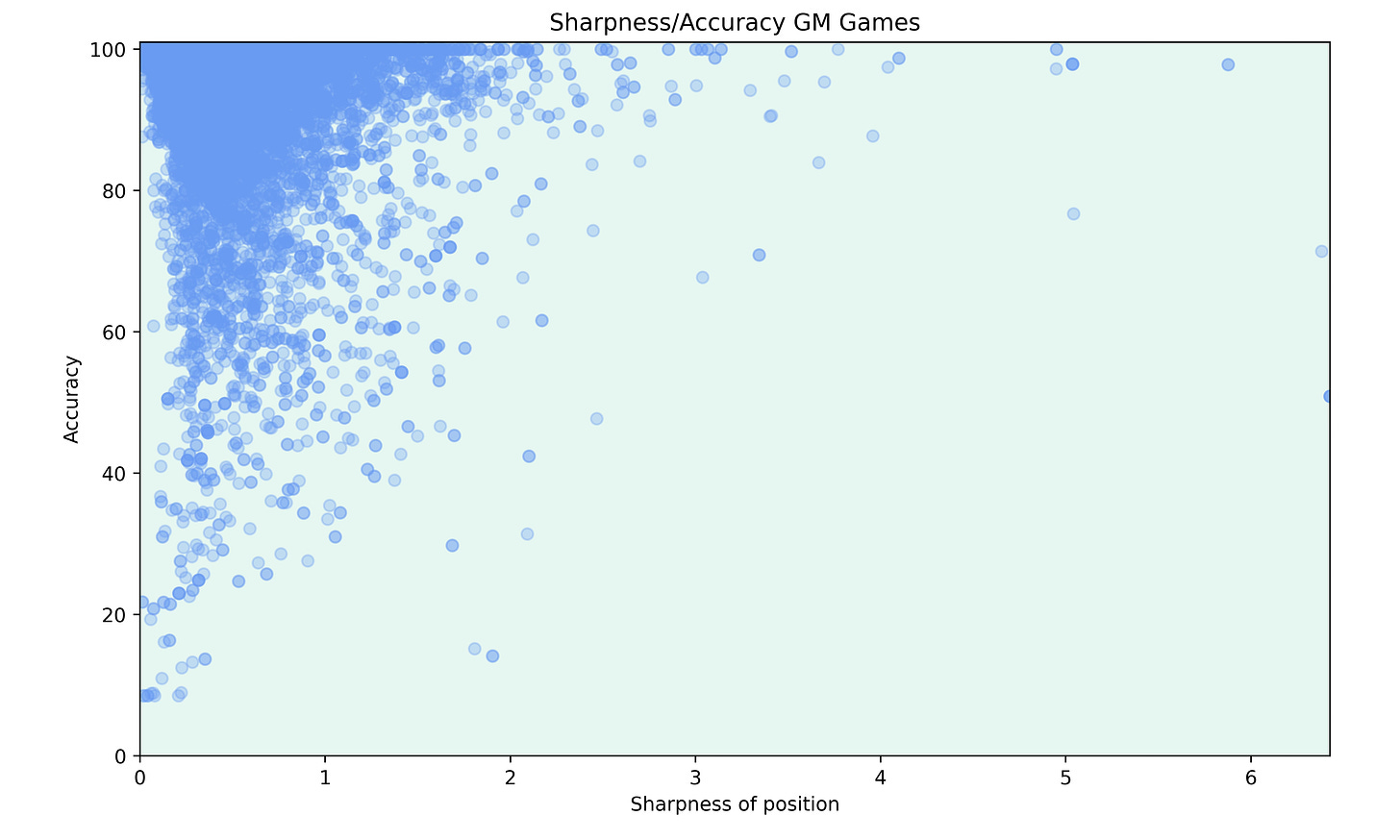

Firstly, I decided to make a scatter plot to get a visual representation of the relation between sharpness and accuracy.

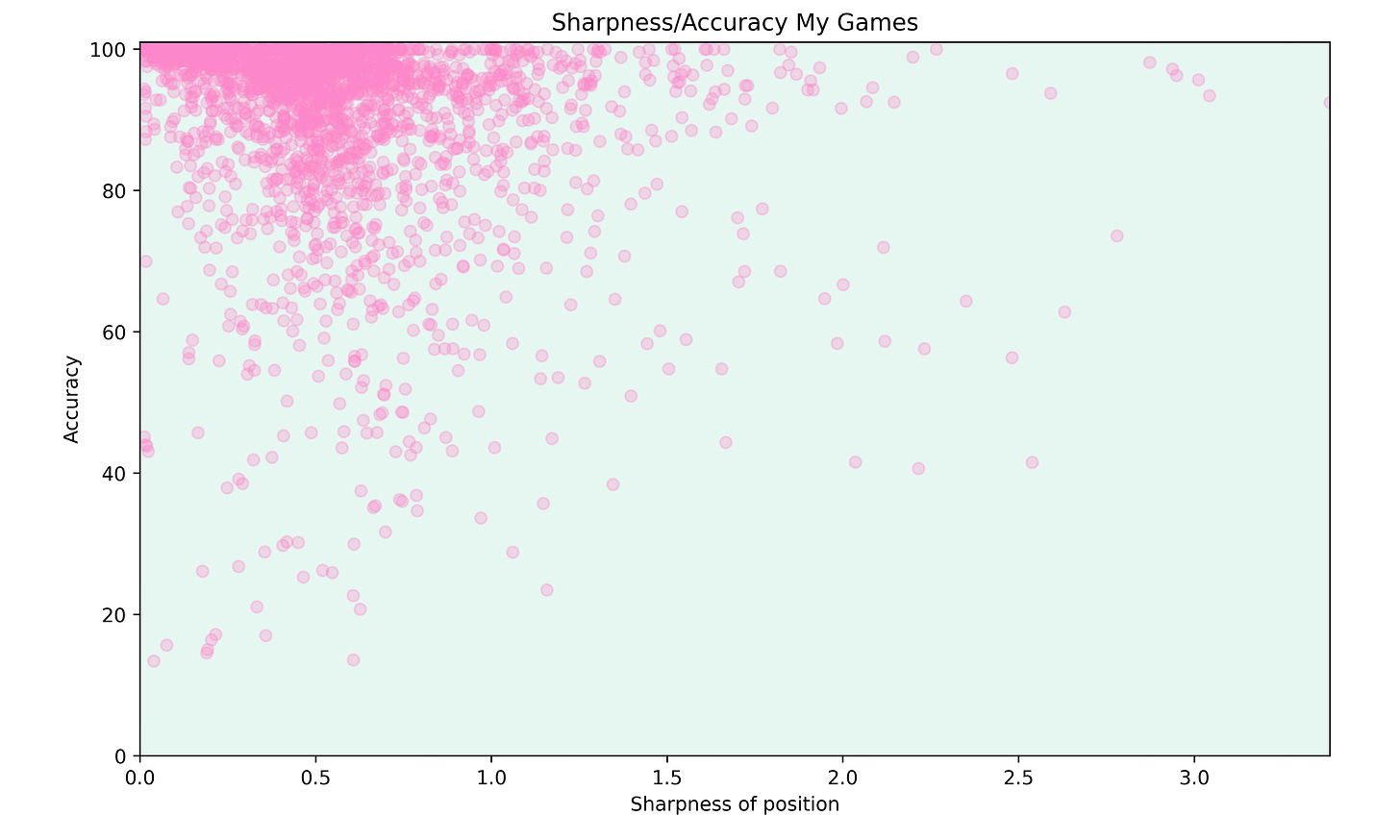

I thought that it might also be interesting to look at some of my own games. So I analysed 42 of my classical games and got the following plot for them.

In general, there seems to be a slight relation between sharpness and accuracy, but the data points are scattered quite widely.
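One way to put a number on "a slight relation" would be a correlation coefficient. The following is only a sketch of the standard Pearson correlation written with the standard library; it was not part of the analysis above, and the function name `pearson` is my own choice. A value near 0 means no linear relation, and a negative value would mean that accuracy tends to drop as sharpness rises.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences of numbers."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of products of deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / math.sqrt(var_x * var_y)
```

Passing the list of sharpness values as `xs` and the matching accuracy values as `ys` gives a single number between -1 and 1. Note that it only captures linear relations, so it complements the scatter plot rather than replacing it.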

I think that there are multiple reasons for this. Firstly, players often blunder in positions which aren't too complex. This can have several causes, such as time trouble, pushing too hard for a win or simply a lack of concentration. I don’t think that there is any way to account for these factors when looking at the data.

Another reason might be opening theory. Many theoretical lines are very sharp, so if the players know the computer lines by heart, they won't make any mistakes in these lines. I thought about filtering out book moves (as discussed in a previous post), but the resulting data was even more confusing. Since there is no way of knowing when a player was out of book (or when a player thought they were still in book but had in fact mixed up their lines), I decided against excluding any opening moves.

Looking at Sharpness Ranges

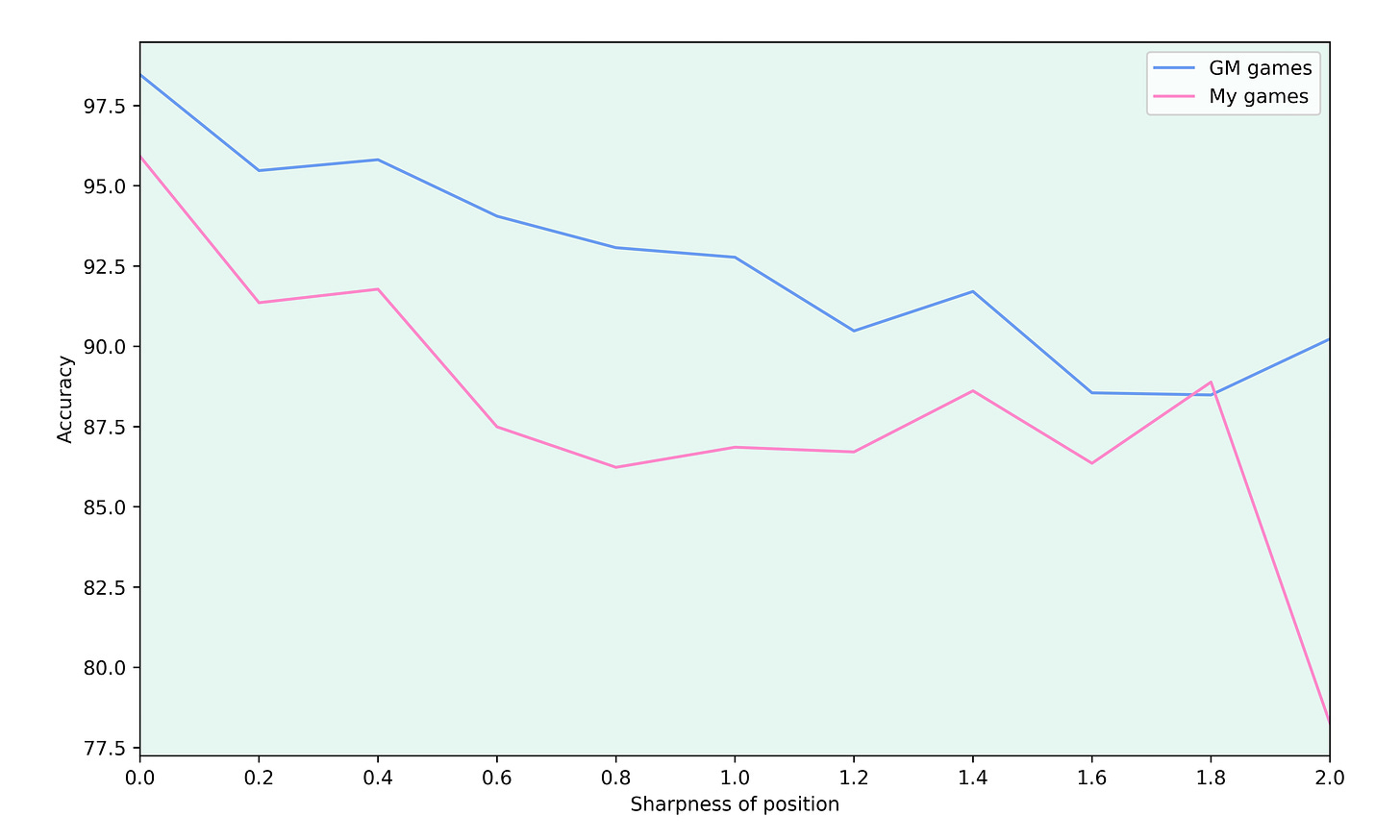

Since the sharpness is a continuous score, I thought that it might be helpful to group the sharpness values into discrete buckets in order to get a clearer picture of the data. After all, some minuscule change in the sharpness shouldn’t really effect the accuracy of play in the position.

I decided to split all sharpness values below 2 into intervals of length 0.2, so the first interval is 0-0.2, the second 0.2-0.4 and so on. I grouped all sharpness values above 2 together since there aren't many positions with such a high sharpness.

For each of these sharpness ranges, I calculated the average accuracy of all moves in the range. This lead to the following plot:

This shows a much stronger relation between the accuracy and the sharpness. In the grandmaster games, there is a clear downwards trend. In my games, the accuracy is highest for the lowest sharpness values and stabilises around a sharpness of 0.8. A reason for this might be that lower level games are more random, so mistakes tend to happen in any kind of position.
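The bucketing and averaging described above can be sketched roughly as follows. This is an illustration rather than the exact code behind the plot: the function names are made up, the input is assumed to be a list of `(sharpness, accuracy)` pairs with non-negative sharpness, and I've assumed that a value of exactly 2 goes into the top bucket.

```python
from collections import defaultdict


def bucket_label(sharpness, width=0.2, cap=2.0):
    """Return a label such as '0.4-0.6', or '2.0+' for values at or above the cap."""
    if sharpness >= cap:
        return f"{cap:.1f}+"
    # The small epsilon guards against float error at bucket edges
    # (0.6 / 0.2 evaluates to slightly less than 3 in floating point).
    index = int(sharpness / width + 1e-9)
    low = index * width
    return f"{low:.1f}-{low + width:.1f}"


def average_accuracy_by_bucket(moves, width=0.2, cap=2.0):
    """moves: iterable of (sharpness, accuracy) pairs. Returns {label: mean accuracy}."""
    totals = defaultdict(lambda: [0.0, 0])  # label -> [sum of accuracies, count]
    for sharpness, accuracy in moves:
        entry = totals[bucket_label(sharpness, width, cap)]
        entry[0] += accuracy
        entry[1] += 1
    return {label: total / count for label, (total, count) in totals.items()}
```

Keeping `width` and `cap` as parameters makes it easy to rerun the same averaging with different bucket boundaries.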

However, I'm unsure if this is the best way to look at such a relation. One problem is that the sharpness ranges are completely arbitrary and the picture might change if the ranges are chosen differently. For example, the scatter plot of my games shows that there were some very accurate moves in positions with a sharpness score between 2.7 and 3. So if the buckets were extended beyond 2, the average accuracy would probably be higher in the 2.8-3 range than in the 1.8-2 range.
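One possible way to reduce the dependence on hand-picked boundaries would be to use equal-count bins instead of fixed-width ones: sort the moves by sharpness and cut them into groups of roughly the same size, so that no average rests on just a handful of positions. This is a different technique from the fixed 0.2-wide ranges used above, and the sketch below (with a made-up function name and a list of `(sharpness, accuracy)` pairs as assumed input) is only meant to illustrate the idea.

```python
def equal_count_bins(moves, n_bins=10):
    """Split moves, sorted by sharpness, into n_bins groups of near-equal size.

    moves: list of (sharpness, accuracy) pairs.
    Returns one (mean sharpness, mean accuracy, count) tuple per group.
    """
    ordered = sorted(moves, key=lambda move: move[0])
    n = len(ordered)
    if n_bins < 1 or n < n_bins:
        raise ValueError("need at least one move per bin")
    result = []
    for i in range(n_bins):
        # Integer arithmetic spreads any remainder across the bins.
        chunk = ordered[i * n // n_bins:(i + 1) * n // n_bins]
        mean_sharpness = sum(s for s, _ in chunk) / len(chunk)
        mean_accuracy = sum(a for _, a in chunk) / len(chunk)
        result.append((mean_sharpness, mean_accuracy, len(chunk)))
    return result
```

The count in each tuple also makes it visible how many positions stand behind every average, which is exactly the information that is missing for sparse ranges such as 2.8-3.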

Conclusion

Overall, I found it very interesting to look at the data, but it also feels like I have more questions now than at the beginning of the project. I’m particularly unsure whether book moves skew the data and, if so, how best to account for them. As mentioned at the beginning, if you have any suggestions or improvement ideas, please let me know.

One future idea could be to use such an analysis of real games to create a sharpness score that more closely reflects the likelihood of humans making mistakes in a position. But in order to do this, one would probably need to look at far more games and maybe split the score based on the level of the players.

A note on the +3 cutoff: I tried to tweak the sharpness score to prevent it from blowing up for one-sided positions, but this had many consequences for all kinds of positions and I didn’t know how to evaluate which score works best.